Kubernetes Basics 5: Service

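A Service in Kubernetes is an abstraction layer: it selects Pods that share certain characteristics and defines a single way to access them. A Service decouples Pods from one another (without it, a Pod that wants to reach another Pod has to know that Pod's IP address). Which Pods a Service selects is usually determined by a LabelSelector.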
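To make the selection rule concrete, here is a minimal Python sketch of equality-based label matching. It is only an illustration of the idea, not Kubernetes' own code; the pod names and the `testnginx2` and `redis` labels are hypothetical, while `app=nginx` and `release=testnginx1` mirror the labels that appear in the Pod output later in this article.

```python
def selector_matches(selector: dict, pod_labels: dict) -> bool:
    """Return True if every key/value pair of the selector is present in the pod's labels."""
    return all(pod_labels.get(key) == value for key, value in selector.items())


def select_pods(selector: dict, pods: dict) -> list:
    """Return the sorted names of the pods whose labels satisfy the selector."""
    return sorted(name for name, labels in pods.items() if selector_matches(selector, labels))


# Hypothetical pods, used only for illustration.
pods = {
    "nginx-a": {"app": "nginx", "release": "testnginx1"},
    "nginx-b": {"app": "nginx", "release": "testnginx2"},
    "redis-a": {"app": "redis"},
}

print(select_pods({"app": "nginx"}, pods))
print(select_pods({"app": "nginx", "release": "testnginx1"}, pods))
```

A selector with more key/value pairs is stricter: every pair must match, so the second call selects fewer Pods than the first.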

When you create a Service, the value of the spec.type field in its configuration file determines how the application is exposed:

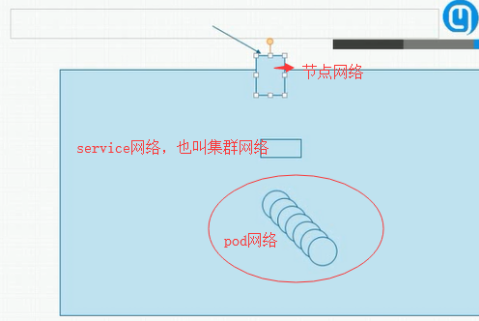

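I. A Kubernetes cluster has three kinds of IP address

Node IP: the IP address of a Node, that is, the address of its physical network interface.

Pod IP: the IP address of a Pod, that is, the address of its container(s). This is a virtual IP address.

Cluster IP: the IP address of a Service. This is a virtual IP address.

LoadBalancer: a load balancer

Communication among the three IP networks

Service addresses and Pod addresses are in different network segments. A Service address is virtual and is not configured on any Pod or host. An external request first reaches the Node network, is then forwarded to the Service network, and is finally proxied to the Pod network.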
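The separation of the three networks can be sketched with Python's standard `ipaddress` module. The CIDRs below are assumptions for illustration: the node range is inferred from the 192.168.0.43/24 address that this article shows for master2, the pod range is a guess based on the 10.100.x.x Pod addresses, and the service range is purely hypothetical. Replace all three with your cluster's real values.

```python
import ipaddress

# Assumed, illustrative CIDRs (see the note above); not read from a real cluster.
NETWORKS = {
    "node": ipaddress.ip_network("192.168.0.0/24"),
    "pod": ipaddress.ip_network("10.100.0.0/16"),
    "service": ipaddress.ip_network("10.96.0.0/16"),  # hypothetical
}


def classify(ip: str) -> str:
    """Return which of the three networks an address belongs to, or 'external'."""
    address = ipaddress.ip_address(ip)
    for name, network in NETWORKS.items():
        if address in network:
            return name
    return "external"


for ip in ("192.168.0.43", "10.100.166.131", "10.96.0.10", "8.8.8.8"):
    print(ip, "->", classify(ip))
```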

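II. Introduction to NodePort

NodePort uses NAT to expose the Service on the same port of every node in the cluster. The Service can then be reached through any node's IP address plus that port: <NodeIP>:<NodePort>. The ClusterIP access path remains available.

A Node IP may belong to a physical machine or to a virtual machine. Each NodePort Service opens a port on every Node, so external clients can reach the Pods behind the Service at NodeIP:NodePort, just as you would reach a project deployed on an ordinary server at IP:port/project-name.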
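As a hedged sketch of what such a Service could look like, the Python snippet below builds a NodePort manifest as a plain dict and prints it as JSON. The Service name `nginx-service`, the port numbers, and the helper `nodeport_service` are all hypothetical; 30000-32767 is the default NodePort range, which a cluster administrator may have changed.

```python
import json

# Default NodePort range; a cluster may be configured with a different one.
NODE_PORT_MIN, NODE_PORT_MAX = 30000, 32767


def nodeport_service(name, selector, port, target_port, node_port):
    """Build a NodePort Service manifest as a plain dict (illustrative helper)."""
    if not NODE_PORT_MIN <= node_port <= NODE_PORT_MAX:
        raise ValueError(f"nodePort {node_port} is outside {NODE_PORT_MIN}-{NODE_PORT_MAX}")
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "NodePort",
            "selector": selector,
            "ports": [{"port": port, "targetPort": target_port, "nodePort": node_port}],
        },
    }


# Hypothetical values: expose the nginx Pods on port 32600 of every node.
manifest = nodeport_service("nginx-service", {"app": "nginx"}, 80, 80, 32600)
print(json.dumps(manifest, indent=2))
```

Because kubectl accepts JSON as well as YAML, the printed manifest could be saved to a file and applied with `kubectl apply -f`.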

2 Looking up the Node IP in Kubernetes

1. kubectl get nodes

2. kubectl describe node nodeName

3. The InternalIP shown in the output is the Node IP

    [root@master1 ~]# kubectl get nodes
    NAME      STATUS   ROLES    AGE   VERSION
    master1   Ready    master   2d    v1.17.4
    master2   Ready    master   2d    v1.17.4
    master3   Ready    master   2d    v1.17.4
    node1     Ready    <none>   43h   v1.17.4
    node2     Ready    <none>   43h   v1.17.4

3 Inspect the master2 node

    [root@master1 ~]# kubectl describe node master2
    Name:               master2
    Roles:              master
    Labels:             beta.kubernetes.io/arch=amd64
                        beta.kubernetes.io/os=linux
                        kubernetes.io/arch=amd64
                        kubernetes.io/hostname=master2
                        kubernetes.io/os=linux
                        node-role.kubernetes.io/master=
    Annotations:        kubeadm.alpha.kubernetes.io/cri-socket: /var/run/dockershim.sock
                        node.alpha.kubernetes.io/ttl: 0
                        projectcalico.org/IPv4Address: 192.168.0.43/24
                        projectcalico.org/IPv4IPIPTunnelAddr: 10.100.180.0
                        volumes.kubernetes.io/controller-managed-attach-detach: true
    CreationTimestamp:  Wed, 15 Apr 2020 10:03:11 +0800
    Taints:             node-role.kubernetes.io/master:NoSchedule
    Unschedulable:      false
    Lease:
      HolderIdentity:  master2
      AcquireTime:     <unset>
      RenewTime:       Fri, 17 Apr 2020 10:23:58 +0800
    Conditions:
      Type                 Status  LastHeartbeatTime                 LastTransitionTime                Reason                       Message
      ----                 ------  -----------------                 ------------------                ------                       -------
      NetworkUnavailable   False   Wed, 15 Apr 2020 10:12:18 +0800   Wed, 15 Apr 2020 10:12:18 +0800   CalicoIsUp                   Calico is running on this node
      MemoryPressure       False   Fri, 17 Apr 2020 10:21:24 +0800   Wed, 15 Apr 2020 10:03:10 +0800   KubeletHasSufficientMemory   kubelet has sufficient memory available
      DiskPressure         False   Fri, 17 Apr 2020 10:21:24 +0800   Wed, 15 Apr 2020 10:03:10 +0800   KubeletHasNoDiskPressure     kubelet has no disk pressure
      PIDPressure          False   Fri, 17 Apr 2020 10:21:24 +0800   Wed, 15 Apr 2020 10:03:10 +0800   KubeletHasSufficientPID      kubelet has sufficient PID available
      Ready                True    Fri, 17 Apr 2020 10:21:24 +0800   Wed, 15 Apr 2020 10:09:42 +0800   KubeletReady                 kubelet is posting ready status
    Addresses:
      InternalIP:  192.168.0.43
      Hostname:    master2
    Capacity:
      cpu:                2
      ephemeral-storage:  17394Mi
      hugepages-1Gi:      0
      hugepages-2Mi:      0
      memory:             3861348Ki
      pods:               110
    Allocatable:
      cpu:                2
      ephemeral-storage:  16415037823
      hugepages-1Gi:      0
      hugepages-2Mi:      0
      memory:             3758948Ki
      pods:               110
    System Info:
      Machine ID:                 702125e0b8574c56aad97aaa4a15f2a2
      System UUID:                9DEA4D56-7E9B-AC0E-391C-1648D6DF739C
      Boot ID:                    fb50b217-86da-4059-a746-f4b30d91aa4b
      Kernel Version:             3.10.0-1062.18.1.el7.x86_64
      OS Image:                   CentOS Linux 7 (Core)
      Operating System:           linux
      Architecture:               amd64
      Container Runtime Version:  docker://18.9.7
      Kubelet Version:            v1.17.4
      Kube-Proxy Version:         v1.17.4
    PodCIDR:                      10.100.1.0/24
    PodCIDRs:                     10.100.1.0/24
    Non-terminated Pods:          (7 in total)
      Namespace     Name                              CPU Requests  CPU Limits  Memory Requests  Memory Limits  AGE
      ---------     ----                              ------------  ----------  ---------------  -------------  ---
      kube-system   calico-node-js8xp                 250m (12%)    0 (0%)      0 (0%)           0 (0%)         2d
      kube-system   etcd-master2                      0 (0%)        0 (0%)      0 (0%)           0 (0%)         2d
      kube-system   kube-apiserver-master2            250m (12%)    0 (0%)      0 (0%)           0 (0%)         2d
      kube-system   kube-controller-manager-master2   200m (10%)    0 (0%)      0 (0%)           0 (0%)         2d
      kube-system   kube-proxy-66jw4                  0 (0%)        0 (0%)      0 (0%)           0 (0%)         2d
      kube-system   kube-scheduler-master2            100m (5%)     0 (0%)      0 (0%)           0 (0%)         2d
      kube-system   kuboard-756d46c4d4-dl279          0 (0%)        0 (0%)      0 (0%)           0 (0%)         44h
    Allocated resources:
      (Total limits may be over 100 percent, i.e., overcommitted.)
      Resource           Requests    Limits
      --------           --------    ------
      cpu                800m (40%)  0 (0%)
      memory             0 (0%)      0 (0%)
      ephemeral-storage  0 (0%)      0 (0%)
    Events:              <none>
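The InternalIP lookup above can also be scripted. The sketch below assumes the node object has been obtained as JSON (for example from `kubectl get node master2 -o json`) and reads the `status.addresses` list; the sample document is a trimmed, hand-written stand-in for real output, and `internal_ip` is a hypothetical helper name.

```python
import json


def internal_ip(node: dict):
    """Return the InternalIP of a node object, or None if it has none."""
    for address in node.get("status", {}).get("addresses", []):
        if address.get("type") == "InternalIP":
            return address.get("address")
    return None


# Trimmed stand-in for `kubectl get node master2 -o json`.
sample_json = """
{
  "metadata": {"name": "master2"},
  "status": {
    "addresses": [
      {"type": "InternalIP", "address": "192.168.0.43"},
      {"type": "Hostname", "address": "master2"}
    ]
  }
}
"""

node = json.loads(sample_json)
print(node["metadata"]["name"], internal_ip(node))
```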

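III. Pod IP

1 Pod IP

A Pod IP is the IP address of an individual Pod. With Docker's bridge networking it is allocated by Docker Engine from the address range of the docker bridge; in a cluster that runs a CNI plugin, the plugin allocates it instead. It usually belongs to a virtual layer-2 network.

Pods behind the same Service can communicate with each other directly by Pod IP.

Pods behind different Services normally communicate inside the cluster through the Cluster IP, because a Pod IP changes whenever the Pod is recreated.

Communication between a Pod and the outside of the cluster goes through the Node IP.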

2 Looking up the Pod IP in Kubernetes

    [root@master1 ~]# kubectl get pods
    NAME                                READY   STATUS    RESTARTS   AGE
    nginx-deployment-55fbd9fd6d-wct2t   1/1     Running   0          42h

    [root@master1 ~]# kubectl describe pod nginx-deployment-55fbd9fd6d-wct2t
    Name:         nginx-deployment-55fbd9fd6d-wct2t
    Namespace:    default
    Priority:     0
    Node:         node1/192.168.0.142
    Start Time:   Wed, 15 Apr 2020 16:11:45 +0800
    Labels:       app=nginx
                  pod-template-hash=55fbd9fd6d
                  release=testnginx1
    Annotations:  cni.projectcalico.org/podIP: 10.100.166.131/32
    Status:       Running
    IP:           10.100.166.131
    IPs:
      IP:           10.100.166.131
    Controlled By:  ReplicaSet/nginx-deployment-55fbd9fd6d
    Containers:
      nginx:
        Container ID:   docker://b14b64854efa4708a455a855993f43a7c1ce3fa52cb9ca9cb3948ac6138ebe8b
        Image:          nginx:1.7.9
        Image ID:       docker-pullable://nginx@sha256:e3456c851a152494c3e4ff5fcc26f240206abac0c9d794affb40e0714846c451
        Port:           <none>
        Host Port:      <none>
        State:          Running
          Started:      Wed, 15 Apr 2020 16:11:47 +0800
        Ready:          True
        Restart Count:  0
        Environment:    <none>
        Mounts:
          /var/run/secrets/kubernetes.io/serviceaccount from default-token-2f42g (ro)
    Conditions:
      Type              Status
      Initialized       True
      Ready             True
      ContainersReady   True
      PodScheduled      True
    Volumes:
      default-token-2f42g:
        Type:        Secret (a volume populated by a Secret)
        SecretName:  default-token-2f42g
        Optional:    false
    QoS Class:       BestEffort
    Node-Selectors:  <none>
    Tolerations:     node.kubernetes.io/not-ready:NoExecute for 300s
                     node.kubernetes.io/unreachable:NoExecute for 300s
    Events:          <none>

IV. ClusterIP

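1 ClusterIP (default)

Exposes the Service on an internal IP of the cluster. A Service of this type is reachable only from inside the cluster.

A Cluster IP is a virtual IP, but it is better thought of as a fake IP network, for the following reasons:

A Cluster IP applies only to the Kubernetes Service object, and Kubernetes manages and allocates the IP address.

A Cluster IP generally cannot be pinged, because no "physical network object" exists to answer.

A Cluster IP forms a usable communication endpoint only in combination with a Service port. On its own, a Cluster IP has no basis for communication, and both belong to the closed space of the Kubernetes cluster.

Pods behind different Services can reach each other across the cluster through the Cluster IP.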
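The point that a Cluster IP only means something together with a Service port can be pictured as a lookup table keyed by the (Cluster IP, port) pair. The sketch below is a deliberately simplified model, not how kube-proxy is implemented; the Cluster IP 10.96.0.50 and the second Pod address are hypothetical, and `resolve` is an illustrative helper.

```python
import random

# Hypothetical table: (Cluster IP, Service port) -> Pod endpoints (Pod IP, targetPort).
SERVICE_TABLE = {
    ("10.96.0.50", 80): [("10.100.166.131", 80), ("10.100.166.132", 80)],
}


def resolve(cluster_ip: str, port: int, rng=random):
    """Pick one backend Pod endpoint for the pair, or None if nothing is registered."""
    backends = SERVICE_TABLE.get((cluster_ip, port))
    if not backends:
        return None  # the Cluster IP alone, or with an unknown port, leads nowhere
    return rng.choice(backends)


print(resolve("10.96.0.50", 80))   # one of the two Pod endpoints
print(resolve("10.96.0.50", 443))  # no such Service port
```

Only the registered pair resolves to a Pod; the same Cluster IP with any other port has nothing behind it, which matches the description of the Cluster IP as a virtual address with no host answering for it.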

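V. LoadBalancer

In a cloud environment (the cloud provider must support it), this type creates a load balancer outside the cluster and uses that load balancer's IP address as the access address of the Service. The ClusterIP and NodePort access paths remain available.

A Service is an abstraction layer: through a LabelSelector it selects a group of Pods, exposes the specified ports of those Pods outside the cluster, and supports load balancing and service discovery.

Exposes Pod ports so that they can be accessed

Load-balances across multiple Pods

Uses Labels and LabelSelectors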

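VI. targetPort

The port of the container (the most fundamental entry port). It matches the port exposed when the container image was built (EXPOSE in the Dockerfile). For example, the official nginx image on docker.io exposes port 80.

For the Dockerfile of the official docker.io nginx image, see https://github.com/nginxinc/docker-nginx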
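To show where targetPort sits relative to the other ports of a Service, the sketch below lists the hops a request could take from a node down to the container. The addresses and the nodePort are hypothetical example values and `port_chain` is an illustrative helper; when targetPort is omitted from a Service port entry, Kubernetes uses the value of port.

```python
def port_chain(node_ip: str, cluster_ip: str, pod_ip: str, port_spec: dict) -> list:
    """Return the address:port hops from the node to the container."""
    port = port_spec["port"]
    target_port = port_spec.get("targetPort", port)  # defaults to the Service port
    hops = []
    if "nodePort" in port_spec:
        hops.append(f"{node_ip}:{port_spec['nodePort']}")  # entry point on every node
    hops.append(f"{cluster_ip}:{port}")                    # virtual Service endpoint
    hops.append(f"{pod_ip}:{target_port}")                 # container port, e.g. nginx on 80
    return hops


# Hypothetical values for illustration.
spec = {"port": 8080, "targetPort": 80, "nodePort": 32600}
print(" -> ".join(port_chain("192.168.0.142", "10.96.0.50", "10.100.166.131", spec)))
```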
